SLS2026 - Popular topics

Debugging

Debugging came up quite a bit during the conference. One particular moment that stuck in my head was Jeff Bolz during the ending panel (timestamp). I found this problem particularly interesting because I don’t think I have ever had a truly terrible experience debugging shaders in my day-to-day work at Rare. Provided I have shader symbols, the experience in PIX tends to be a good one. The process tends to be:

- Take a capture of your issue

- Find the draw/dispatch that caused your problem

- Pick a pixel/thread to debug

- Recompile that shader with debug symbols using Edit and Continue

- Step through the shader to see what is going on.

What can be painful is getting that capture. Once you have a capture, I feel like GPU debugging is getting quite close to CPU debugging in terms of “happiness”. But if the problem manifests as something like a flash or a flicker that happens very quickly, it can be a nightmare to actually get the capture. I suspect these are the situations Jeff was talking about.

In those cases, debug printf would be a great thing… if we had it in HLSL :). Though even this has its flaws. One of the issues that we have, in Unreal Engine games at least, is that the turnaround time for changing some shader code, recompiling it, and redeploying it can be exceedingly long. If I were to add some debug code to a shader that is used in the base pass of Unreal Engine, I would essentially have to recook the game.

I genuinely think that something that will help in this department is actually having tests for your shader code. I may be biased in this regard, but it just makes sense. If you can test individual parts of your shader code by checking to see what the behaviour is at boundary conditions, it makes debugging issues much easier. This is literally the reason that automated testing exists for CPU projects. It should exist in the same way for GPU code… especially since our shaders are now getting to be absolutely huge.
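As a rough illustration of what checking behaviour at boundary conditions looks like, here is a minimal sketch. It is written in C++ purely so that it can run standalone; a real shader test would run on the GPU through a shader testing framework. The helper `remap01` and the chosen cases are hypothetical and are not taken from any real codebase.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical shader-style helper, mirrored in C++ for illustration only.
// Remaps x from [lo, hi] to [0, 1] and clamps the result, much like
// saturate((x - lo) / (hi - lo)) would in a shader.
inline float remap01(float x, float lo, float hi) {
    if (hi <= lo) {
        // Degenerate range: behave like a step at hi and avoid dividing by zero.
        return x >= hi ? 1.0f : 0.0f;
    }
    const float t = (x - lo) / (hi - lo);
    return std::min(std::max(t, 0.0f), 1.0f);
}

// Boundary-condition checks: the edges of the range, values just outside it,
// and the degenerate case where the range has zero width.
inline void test_remap01_boundaries() {
    assert(remap01(0.0f, 0.0f, 1.0f) == 0.0f);   // lower edge
    assert(remap01(1.0f, 0.0f, 1.0f) == 1.0f);   // upper edge
    assert(remap01(-5.0f, 0.0f, 1.0f) == 0.0f);  // below the range clamps to 0
    assert(remap01(5.0f, 0.0f, 1.0f) == 1.0f);   // above the range clamps to 1
    assert(remap01(2.0f, 2.0f, 2.0f) == 1.0f);   // zero-width range, x at hi
    assert(remap01(1.0f, 2.0f, 2.0f) == 0.0f);   // zero-width range, x below hi
}
```

The zero-width range is the kind of case that tends to show up on screen as a NaN or a flicker, which is exactly the sort of bug that is hard to capture after the fact.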

Testing

I mentioned testing in the last topic, so I might as well move on to it. Testing came up in many of the talks, even if you ignore mine.

- Arcady mentioned that any PRs made to GLSLang needed to be accompanied by tests (timestamp)

- Dan talked about the Conformance Test Suite for WGSL (timestamp)

- Lee talked about a unit test framework in WESL (timestamp)

This was great to hear. It was especially great to see the WESL guys chatting about unit testing shaders, rather than just comparing pixels. I had some good discussions with both Lee and Stefan, as well as many others, throughout the conference on the topic of testing.

Strings

I want strings in my shading language. It is why I heavily rely on Shader Printf in my testing framework. But I wasn’t the only one. Chris from Epic talked about OSL (Open Shading Language) which has string support (timestamp). Granted OSL doesn’t run on the GPU, but it is still a shading language nonetheless. I had a chat with (a different) Chris, the principal architect of HLSL, about how strings might be implemented in HLSL in the future. Most of what we chatted about can be found in his recent blog here. This code snippet is particularly exciting:

    template <typename... T> void printf(string Str, T... Args) {
      uint ThreadIndex = WavePrefixSum(1);
      uint ThreadCount = WaveActiveSum(1);
      uint StrOffset = __builtin_hlsl_string_to_offset(Str);
      uint ArgSize = NumDwords<T...>() * 4;
      uint MessageSize = sizeof(MessagePrefix) + (ThreadCount * ArgSize);
      uint StartOffset = 0;
      if (WaveIsFirstLane()) {
        InterlockedAdd(OutputOffset, MessageSize, StartOffset);
        MessagePrefix Prefix = {ThreadCount, StrOffset, ArgSize};
        DebugOutput.Store<MessagePrefix>(StartOffset, Prefix);
      }
      StartOffset = WaveReadLaneFirst(StartOffset);
      uint ThreadOffset =
          StartOffset + sizeof(MessagePrefix) + (ThreadIndex * ArgSize);
      StoreArgs(DebugOutput, ThreadOffset, Args...);
    }

Variadics and strings… Exceptionally exciting!

Personally, I feel like there is no reason to not also support char and a string_view-like structure in HLSL. If I can have an int[] why can’t I have a char[]? Even if we didn’t get that though, this type of thing looks like it would work well enough.
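To get a feel for what the CPU side of the printf snippet above might involve, here is a hedged sketch of a decoder for the debug buffer once it has been read back. Everything in it is an assumption inferred from the snippet: that `MessagePrefix` is three tightly packed 32-bit values in the order `{ThreadCount, StrOffset, ArgSize}`, that each prefix is followed by `ThreadCount * ArgSize` bytes of arguments, and that the final value of `OutputOffset` is available as `usedBytes`. The names `LaneMessage` and `decode_debug_output` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Assumed layout, inferred from `MessagePrefix Prefix = {ThreadCount, StrOffset, ArgSize}`.
struct MessagePrefix {
    uint32_t threadCount;  // number of active lanes that called printf together
    uint32_t strOffset;    // identifies the format string
    uint32_t argSize;      // bytes of arguments written per lane
};

// One decoded printf call from a single active lane.
struct LaneMessage {
    uint32_t strOffset;
    std::vector<uint32_t> args;  // raw argument dwords, still untyped
};

// Walks the read-back buffer message by message.
// `usedBytes` is assumed to be the final value of OutputOffset.
inline std::vector<LaneMessage> decode_debug_output(
        const std::vector<uint32_t>& buffer, std::size_t usedBytes) {
    assert(usedBytes % 4 == 0);  // every write in the snippet is a whole number of dwords
    std::vector<LaneMessage> out;
    const std::size_t prefixDwords = sizeof(MessagePrefix) / 4;
    const std::size_t usedDwords = std::min(usedBytes / 4, buffer.size());
    std::size_t cursor = 0;
    while (cursor + prefixDwords <= usedDwords) {
        const MessagePrefix p{buffer[cursor], buffer[cursor + 1], buffer[cursor + 2]};
        cursor += prefixDwords;
        const std::size_t argDwords = p.argSize / 4;
        // Stop if the message claims more data than the buffer holds.
        if (cursor + p.threadCount * argDwords > usedDwords) {
            break;
        }
        // Slots are ordered by WavePrefixSum index, not by raw lane ID.
        for (uint32_t slot = 0; slot < p.threadCount; ++slot) {
            LaneMessage m{p.strOffset, {}};
            m.args.assign(buffer.data() + cursor, buffer.data() + cursor + argDwords);
            cursor += argDwords;
            out.push_back(std::move(m));
        }
    }
    return out;
}
```

Turning the raw dwords into text would still need the format string behind each `strOffset`, which is where the string support discussed above comes in.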

Visual Node Graphs

Everyone loves a node graph! And there were two talks this year which talked about using node graphs in two different but related domains.

Shader Node Graphs

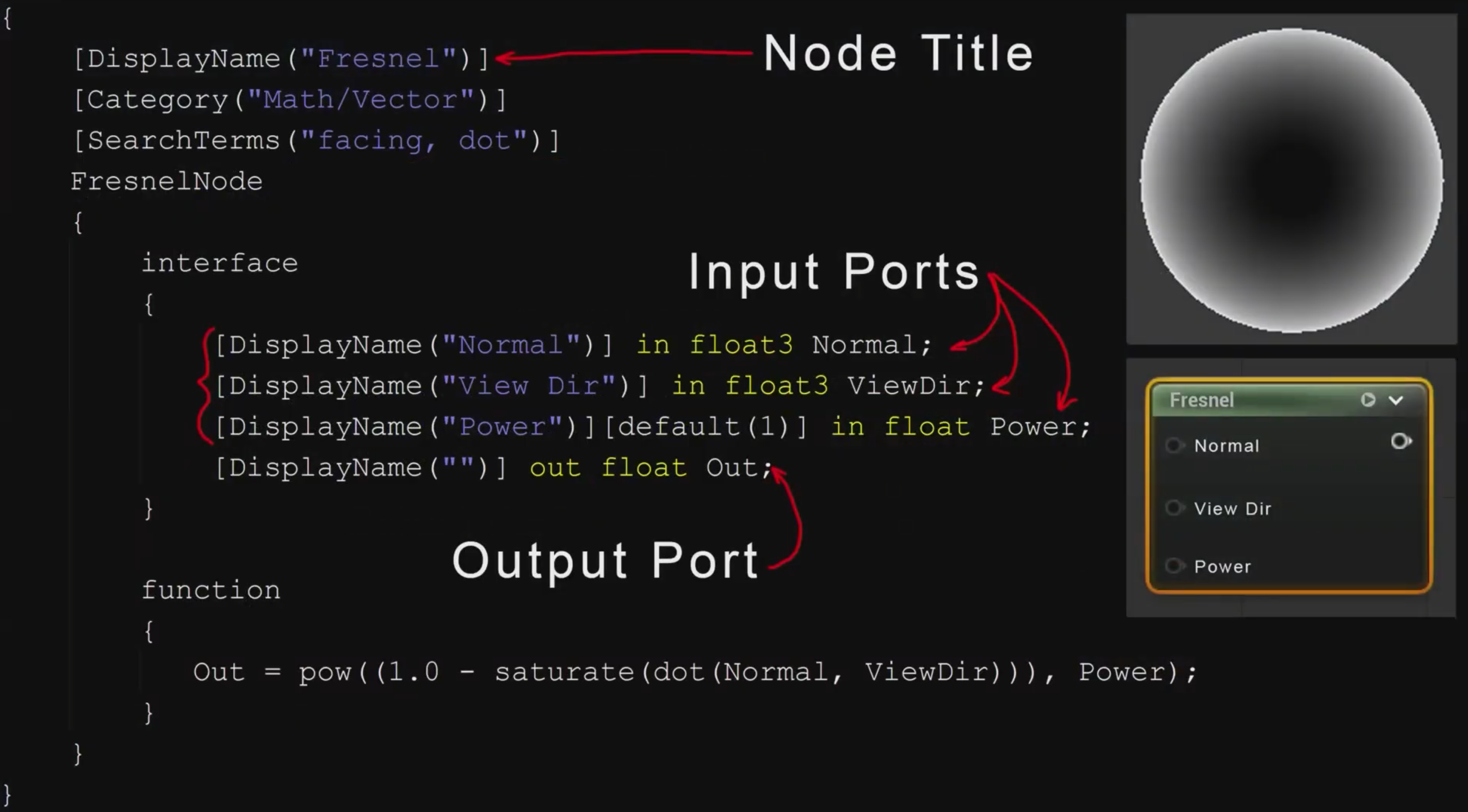

Ben Cloward had a great talk on how having a node editor for writing shaders saves everyone a huge amount of time (timestamp). Coming from a decade of working in Unreal Engine, this all made a lot of sense to me. Most of our shaders are authored as Materials using the UE Material system. I think that one of the more interesting topics that Ben brought up was the concept of making our shading languages aware of node graphs (timestamp).

Note

I couldn’t help but note that this syntax was quite reminiscent of Unity’s ShaderLab.

I think that this was a really neat idea. I think Ben was quite right when he suggested that this wouldn’t need to be just for node graphs either. It could literally just be a custom annotation system that node graph systems could reflect on to generate the nodes from the actual shader code. I think that this would be a really cool addition to any shading language!

With all this being said, during the open panel (timestamp) Chris Bieneman brought up a point that I am sure a lot of people won’t be thrilled with: it is quite possible that node graphs, as a tool, might get replaced with LLMs. The whole point of node graphs is to make writing shaders easier for artists, and to provide a quick way to prototype effects. They give a nice visual interface that makes generating shader code easier and limits access to footguns.

Note

As an engineer, I personally dislike node graphs. They are difficult to review. They are difficult to test. They literally add a whole new dimension (because your nodes have actual 2D Coordinates in space) to the problem of code organization. They require a specific engine to actually open and read… You get the point. I can’t deny their utility. They are fantastic tools. I personally just dislike them and when I get a render bug, and I track the problem down to a material, I despair.

However, making shaders easier to write is exactly where LLMs are making great progress, and in some ways they could be better than node graphs:

- LLMs allow people to specify the code they want to write with natural language. This may be more convenient than having to create and link up nodes in a node graph.

- LLMs will produce human-readable shaders that don’t require an engine to view. That makes them much easier for rendering engineers to review.

I am not saying that this is the world that we live in now or the world that will exist in the near future, but this is absolutely a possibility. And with the idea of having ways to annotate our shaders in the code, we would be able to provide nice interfaces for artists to tune their shaders and materials with constants.

Render Node Graphs

Alan Wolfe gave a great presentation on Gigi. Gigi is a visual node editor, but instead of its output being a shader, it outputs a render graph. Gigi takes the whole concept of a render graph to its logical conclusion by making render graphs an asset that can be loaded, edited, and executed. What this means is that people can use Gigi to prototype entire render pipelines quickly and easily using a visual node-based system. One of the really cool parts of Gigi is what it can output. It can generate render graph code for WebGPU, DX12, and UE5. So, if you want to create a rendering system to use in your UE5 game, you can use Gigi to do some rapid prototyping of the feature, export it to UE5, and then load it in your plugin. Super cool idea. You can watch Alan’s talk at this link.

Although not presented at the Shading Languages Symposium (it was presented at Vulkanised a few days prior), I feel like vkduck deserves a mention. This was presented by Lamies Abbas, and it was a fantastic talk about a project similar to Gigi (in fact, Gigi is mentioned as an inspiration for it), which was born out of the fact that just getting a triangle to render in Vulkan is hard. In the talk, Lamies covers the motivations for creating vkduck (github link) and also goes into its implementation.

Shareable Shader Code

There was a lot of talk around how we can get better at writing libraries for shader code in the same way that we write libraries and frameworks for CPU code. This was something I also touched on in my talk.

Note

If we have the ability to write testing frameworks that run wholly in our shading language then we can write modular, shareable, and testable libraries

The only issue with HLSL here is that it has the concept of Include Handlers, which means that include resolution is inherently non-standard… This can be seen in Unreal, which has its own way of managing shader paths with Virtual Shader Directories. This is a bit of an issue. However, with HLSL’s desire to be more like C++, perhaps there is the possibility of also getting C++ modules to solve the header problem… Though I would hope that HLSL modules would get a little more adoption than C++’s…

Note

You know C++ modules are in trouble when, 5 years after standardization, talks are still being given about their struggles with adoption. Link

Slang uses a module system for exporting and importing shareable units of code. I have only written Slang in Godbolt, but from what I can see, it is quite like C++ modules. You can hear more about Slang’s modules from Shannon at this timestamp.

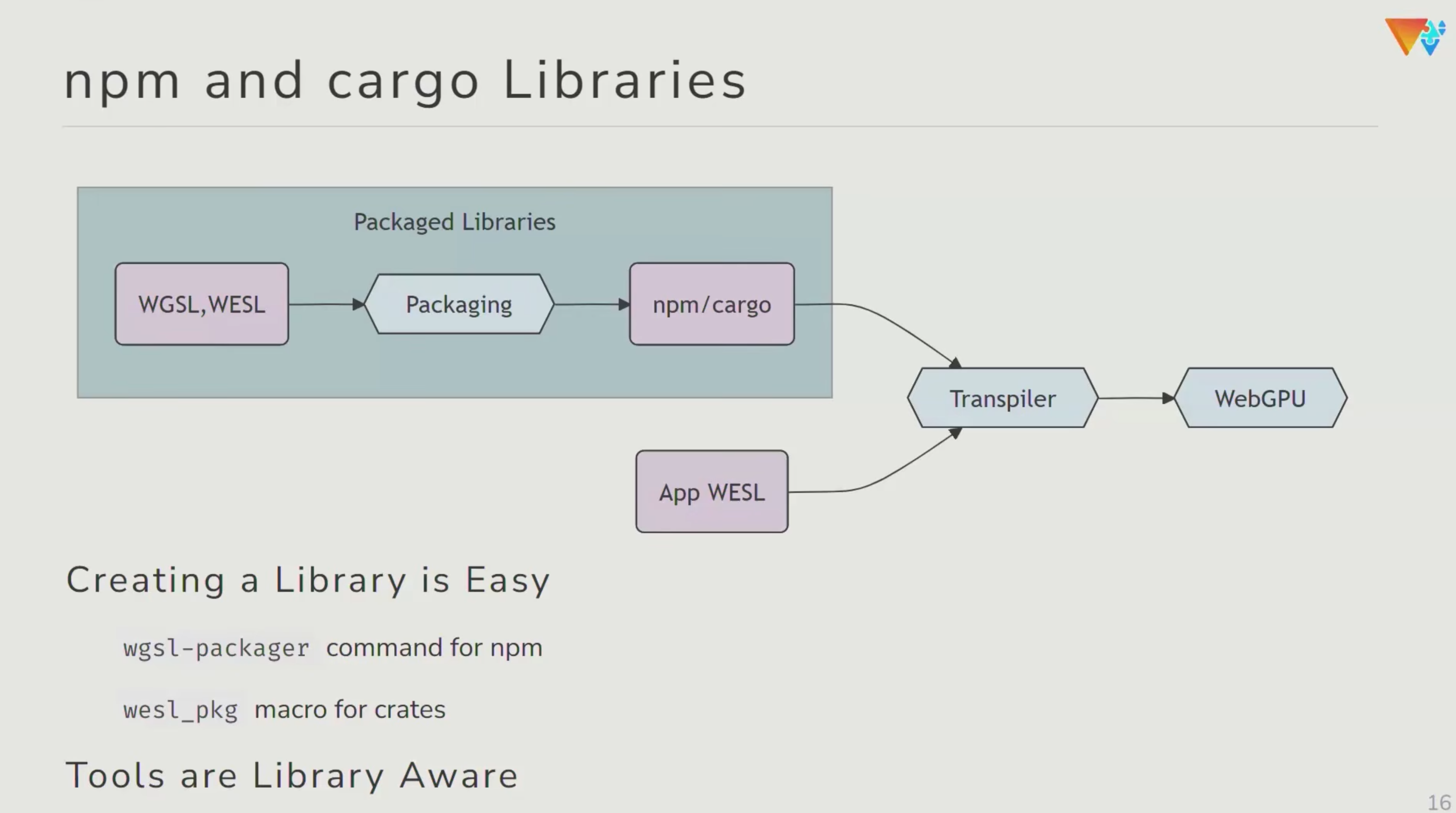

WESL was very interesting to see with regard to package management. WESL is built for browsers, so it lives very much in the JavaScript ecosystem. As such, it is able to just use a package manager like npm to import libraries while in the editor, and it just magically works.

It was really cool to see this happening in a live demo. Even with modules, languages like Slang and HLSL seem a long way off having package manager support in the same way that WESL has. But it is certainly something to aim for!

OSL (Open Shading Language) is built on the idea of small shareable units of code. With OSL, each shader can be thought of as a library called a “Node”. Although you can have one complete shader with a single node, typically they are made of a network of these nodes (timestamp).

The chat around shareable code continued into the open panel and was actually the first topic of discussion, which you can find here.

Shading Language History

A lot of the talks in the symposium talked about the history (and future) of various shading languages. We had talks in the symposium with names like GLSL: Origins…, HLSL: Decades in the making, and WGSL: Past, Present and Future. These were all really interesting and I learned a fair amount of cool trivia:

- HLSL is technically older than GLSL. I think I just assumed GLSL would have been older.

- WGSL (and by extension WESL) exists because other shading languages are not deemed to be secure. WGSL is built for the web, where any input could be an attack.

- Unity is still clinging to FXC. Unity uses FXC for everything.

Intellisense

I mentioned in my talk that we are sorely missing IntelliSense-like features (timestamp). From listening to many of the other talks, this is absolutely an important feature, and one that other languages are very proud to claim. Here is Shannon talking about it for Slang, and Stefan doing the same for WESL. I am hoping again that HLSL will get the same niceness when HLSL in Clang is all working :)

“Writing compilers is easy!”

This was said so much during the conference by so many people that I almost started to believe it. It was said in many of the talks (example), and some of the reasoning I got from people is as follows:

- Most compilers follow the same general structure

- If your compiler isn’t deterministic, there is a bug

- The problem space is actually quite narrow

I will accept that this is all likely true, but I am also aware that I was in a room with probably some of the most experienced shader language compiler engineers in the world. So it probably should be taken with a grain of salt as to how “easy” it is. However, it certainly did make me wonder if I could hack something into HLSL with DXC/Clang with a little elbow grease. It certainly seemed to be something that actual people were doing. For example, Hugo got so annoyed by the fact that he couldn’t have some features in available shading languages that he went and made his own compiler.

This was a great talk and you can find it here.

“Should we ditch shading languages and just make GPU C++?”

There were two talks that demonstrated a want for this:

- Abstraction done right, first do no harm -> Presented by the folks at DevSH. They got tired of the friction from having to write both C++ and HLSL, so they made the Nabla library which aims to provide a way of writing shaders and CPU code with a single source language.

- Keep it DRY: From C++20 to GPU Compute -> Presented by Koen Samyn from Howest. He gave a very similar talk at CppCon 2025. The main issue that Koen is facing is that teaching graphics programming to students requires teaching C++, graphics APIs like OpenGL and D3D, and shading languages. These topics are hard, so to reduce the cognitive load on his students he has made a framework that lets them write shader code in C++ and has Clang transpile the C++ to GLSL to be consumed by his framework.

Both of these talks express the want for C++ to just work on the GPU. This was also a question in the open panel discussion (timestamp). Chris Bieneman took this question, I assume because HLSL is moving towards having more parity with C++. Despite this direction, Chris admits that there are C++ features that probably should never be on the GPU (e.g. exceptions). I personally agree with Chris on this to some extent. However, the elephant in the room is CUDA. CUDA might not support all the C++ features but it certainly supports most! So, if CUDA can do it, why can’t other shading languages? I feel like the answer here is in the problem domain. CUDA is solely meant for GPGPU programming. It has no concerns around graphics programming.

My opinion on this is that as long as shading languages are distinct from C++, we should not be equating them with C++ or trying to treat them as the same. At the end of the day, C++ and shading languages usually operate on different hardware (though some shading languages are very much CPU-only) to solve different problems. Trying to make common headers is only asking for pain when differences in the languages crop up. For example, something as simple as a bool is fundamentally different between HLSL and C++.
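To make the bool example concrete, here is a small sketch of what goes wrong on the C++ side of a shared struct. It assumes that HLSL stores a bool as a 32-bit value in buffer memory, and that the C++ compiler uses a 1-byte bool (typical, but implementation-defined). The struct names, field names, and the `GpuBool` wrapper are all hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical CPU mirror of an HLSL struct
//   { bool CastShadows; bool ReceiveShadows; float Intensity; }
// With a typical 1-byte C++ bool, both flags land in the first four bytes,
// so `intensity` does not sit where the shader would look for it.
struct NaiveParams {
    bool  castShadows;
    bool  receiveShadows;
    float intensity;
};

// Stores the flag as a 32-bit value to match the assumed HLSL buffer layout.
struct GpuBool {
    uint32_t value;
    constexpr GpuBool(bool b = false) : value(b ? 1u : 0u) {}
    constexpr operator bool() const { return value != 0u; }
};

struct MatchedParams {
    GpuBool castShadows;
    GpuBool receiveShadows;
    float   intensity;
};

// These hold on any compiler where uint32_t and float are 4 bytes.
static_assert(sizeof(GpuBool) == 4, "a flag must occupy one dword");
static_assert(offsetof(MatchedParams, intensity) == 8, "two dword flags come first");
static_assert(sizeof(MatchedParams) == 12, "three dwords in total");

// Reports whether the naive mirror happens to line up on this compiler.
constexpr bool naive_layout_matches() {
    return sizeof(NaiveParams) == sizeof(MatchedParams) &&
           offsetof(NaiveParams, intensity) == offsetof(MatchedParams, intensity);
}
```

A wrapper like this works, but it is one more thing every shared header has to get right, which is the kind of friction I mean.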